How do advanced GPU chipsets improve computing power?

Last week I watched a friend render a 4K animation that would have taken his old setup three days to complete. His new machine? Forty-seven minutes. This genuinely fascinated me—the difference wasn’t RAM or CPU cores or some exotic cooling system. It was the GPU chipset doing what modern silicon does best: turning impossibly complex math into something that just works.

But here’s what most people miss about GPU performance gains, and this honestly frustrates me when I see tech reviews that focus on the wrong metrics. It’s not just about bigger numbers or more transistors crammed into the same space, though that certainly helps. The real magic happens in the architecture itself, where engineers have fundamentally reimagined how computation should flow through silicon pathways.

Your CPU is hitting a wall

Think of your CPU as a brilliant chess grandmaster, methodically considering each move with surgical precision. Sequential tasks? Absolutely flawless. But ask it to calculate lighting effects for 2 million pixels simultaneously, and you’ve basically asked that grandmaster to play 2 million chess games at once while juggling flaming torches.

Not exactly optimal.

GPUs slice through this limitation like a hot knife through butter. Instead of one genius-level thinker, they deploy thousands of simpler workers who attack problems from every angle simultaneously. When you’re training an AI model that needs to process matrices with millions of parameters, each parameter demanding its own computational attention, parallel processing isn’t just helpful. It’s the difference between possible and impossible.
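To make the "thousands of simpler workers" idea concrete, here is a minimal Python sketch. The `shade` function is a made-up stand-in for a lighting calculation, and the worker count is arbitrary. The point is structural: each pixel's result depends only on that pixel, so the work can be split into chunks and handled in any order with the same answer. Python threads will not deliver GPU-style speedups; this only illustrates why the problem parallelizes so cleanly.

```python
from concurrent.futures import ThreadPoolExecutor


def shade(pixel):
    """Hypothetical per-pixel lighting: the result depends only on this pixel."""
    x, y = pixel
    return (x * 31 + y * 17) % 256


def shade_chunk(chunk):
    """Shade one independent slice of the image."""
    return [shade(p) for p in chunk]


def shade_parallel(pixels, workers=4):
    """Split the image into chunks and hand each chunk to a separate worker."""
    size = max(1, len(pixels) // workers)
    chunks = [pixels[i:i + size] for i in range(0, len(pixels), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as the input chunks
        results = pool.map(shade_chunk, chunks)
    return [value for chunk in results for value in chunk]


# A tiny 64x48 "image" (an assumption chosen to keep the example fast)
pixels = [(x, y) for y in range(48) for x in range(64)]
serial = [shade(p) for p in pixels]
parallel = shade_parallel(pixels)
```

Because no pixel waits on any other, `serial` and `parallel` hold identical values. A GPU exploits the same independence, just with thousands of hardware lanes instead of four threads.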

Architecture that nobody talks about at dinner parties

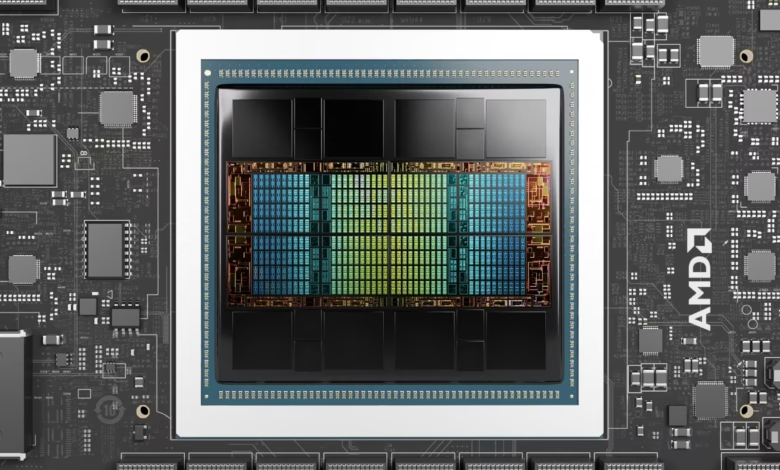

Can we take a moment to appreciate how radically different modern GPU chipsets have become? NVIDIA’s AD102, the chip behind the GeForce RTX 4090, represents something that would have seemed like science fiction not long ago. The jump isn’t just a matter of bigger numbers. It’s architectural, fundamental, almost philosophical in its approach to computation.

These chips include dedicated tensor cores that speak AI’s native language. While regular GPU cores handle graphics rendering with graceful efficiency, tensor cores are purpose-built for the matrix operations that drive neural networks. They don’t just accelerate machine learning. They transform it from a slow, grinding process into something fluid and responsive.
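The matrix operation at the heart of this is a multiply-accumulate: take two small tiles, multiply them, and add the result to a third. Here is a rough pure-Python sketch of that one operation. The 4x4 tile size and the integer values are assumptions for illustration only; real hardware tile shapes and number formats vary by GPU generation.

```python
def mma(a, b, c):
    """Return a @ b + c for small square tiles stored as lists of lists."""
    n = len(a)
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) + c[i][j] for j in range(n)]
        for i in range(n)
    ]


# Illustrative 4x4 tiles (assumed size, not a hardware specification)
identity = [[1 if i == j else 0 for j in range(4)] for i in range(4)]
a = [[i * 4 + j for j in range(4)] for i in range(4)]
c = [[1] * 4 for _ in range(4)]

# Multiplying by the identity leaves `a` unchanged, so d is `a` plus one everywhere
d = mma(a, identity, c)
```

A neural network layer boils down to enormous numbers of these tile operations, which is why doing each one as a single hardware step pays off so dramatically.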

Ray tracing acceleration tells a similar story. Older real-time rendering faked how light behaves in virtual environments with clever shortcuts, basically educated guessing. Modern GPUs carry dedicated hardware that traces light rays through the scene in real time. The visual difference hits you like stepping from a dimly lit room into brilliant sunlight.
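At its core, tracing a ray means asking one question over and over: does this ray hit that object, and if so, how far away? Here is a minimal sketch of that test in Python, using a sphere because the math fits in a few lines. Production renderers mostly test against triangles organized in acceleration structures, so treat the function name and the scene below as illustrative assumptions.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Return the distance to the nearest hit along the ray, or None on a miss.

    Assumes `direction` is a unit-length vector.
    """
    oc = [o - c for o, c in zip(origin, center)]
    # Quadratic t^2 + b*t + c = 0 for points on the ray that lie on the sphere
    b = 2.0 * sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0:
        return None  # the ray's line never touches the sphere
    sqrt_disc = math.sqrt(disc)
    for t in ((-b - sqrt_disc) / 2.0, (-b + sqrt_disc) / 2.0):
        if t >= 0:
            return t  # nearest intersection in front of the ray origin
    return None  # the sphere is entirely behind the ray


# A sphere of radius 1 sitting 5 units down the z axis (hypothetical scene)
hit = ray_sphere_hit((0, 0, 0), (0, 0, 1), (0, 0, 5), 1.0)
miss = ray_sphere_hit((0, 0, 0), (0, 1, 0), (0, 0, 5), 1.0)
```

Here `hit` comes out as 4.0, the distance to the near surface of the sphere, and `miss` is `None`. A real frame repeats tests like this for millions of rays, which is exactly the kind of repetitive work that benefits from dedicated silicon.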

Memory bandwidth: the unsung hero

Processing power without adequate memory bandwidth is like having a Ferrari with bicycle tires. Advanced GPU chipsets pair their computational improvements with memory systems that move data at roughly a terabyte per second on high-end consumer cards, and several terabytes per second on data-center parts built around high-bandwidth memory.

Terabytes. Per second.

Let that sink in.

OpenAI has never disclosed GPT-4’s parameter count, though unconfirmed estimates put it above a trillion. Even smaller commercial models often harbor billions of parameters, each requiring constant feeding and care. Loading these computational behemoths into memory and keeping them satisfied demands memory systems that our predecessors couldn’t have imagined.
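A bit of back-of-the-envelope arithmetic shows why bandwidth matters so much. The figures below are assumptions picked for round numbers, not measurements of any particular model or card: a 7-billion-parameter model, 2 bytes per parameter (16-bit weights), and 1 TB/s of memory bandwidth.

```python
def weights_bytes(num_params, bytes_per_param):
    """Total memory needed just to hold the model weights."""
    return num_params * bytes_per_param


def seconds_per_pass(total_bytes, bandwidth_bytes_per_s):
    """Best-case time to read every weight from memory once."""
    return total_bytes / bandwidth_bytes_per_s


# All three values are illustrative assumptions
PARAMS = 7_000_000_000          # hypothetical 7-billion-parameter model
BYTES_PER_PARAM = 2             # 16-bit weights
BANDWIDTH = 1_000_000_000_000   # 1 TB/s, in bytes per second

size = weights_bytes(PARAMS, BYTES_PER_PARAM)
t = seconds_per_pass(size, BANDWIDTH)
print(f"{size / 1e9:.0f} GB of weights, {t * 1000:.0f} ms per full read")
```

Under these assumptions the weights occupy 14 GB, and simply reading them once takes about 14 ms even at a terabyte per second. Halve the bandwidth and that floor doubles, no matter how fast the compute units are.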

Where theory crashes into reality

I’ve seen people obsess over core counts and clock speeds, completely missing the forest for the trees. A friend in medical imaging shared something that still gives me chills. Her team can now process MRI scans in real time during surgery, revealing blood flow and tissue changes as surgeons work.

Previously? Hours of post-procedure analysis, when decisions needed to be made in seconds.

Real-time medical imaging demands computational resources that would make a supercomputer from the 1990s weep. You’re transforming three-dimensional data through complex filtering algorithms and rendering results fast enough for human perception to process meaningfully. This goes beyond raw computing power. It requires the right kind of computing power, architecturally optimized for these specific mathematical operations.

Film studios pioneered this territory because movie rendering is genuinely insane. A single Pixar frame can devour hours of traditional processing time. Twenty-four frames per second across a 90-minute film? The computational requirements spiral into astronomical territory.
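To put a rough number on "astronomical," here is the arithmetic as a short Python sketch. The frame rate and runtime come from the paragraph above. The two hours per frame is purely an assumption standing in for "hours," since real render times vary enormously from shot to shot.

```python
FPS = 24                 # frames per second, from the text
RUNTIME_MINUTES = 90     # film length, from the text
HOURS_PER_FRAME = 2      # assumption: a stand-in for "hours" of render time

frames = FPS * 60 * RUNTIME_MINUTES          # total frames in the film
machine_hours = frames * HOURS_PER_FRAME     # single-machine render time
machine_years = machine_hours / (24 * 365)   # same figure expressed in years

print(f"{frames:,} frames, {machine_hours:,} machine-hours, "
      f"about {machine_years:.1f} years on one machine")
```

That works out to 129,600 frames and 259,200 machine-hours, or nearly 30 years on a single machine under these assumptions. It is why studios lean on render farms and, increasingly, on GPUs.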

Unexpected connections

Here’s where things get interesting, though. The same GPU acceleration that brings animated characters to life now powers autonomous vehicle training and protein folding research. The technology animating realistic hair movement also helps researchers understand how SARS-CoV-2 proteins interact with potential treatments.

Which makes sense, actually. Both involve complex physical simulations operating under similar mathematical principles.

The real question

Advanced GPU chipsets don’t just accelerate existing processes. They crack open entirely new possibilities. Voice assistants that track context instead of pattern-matching keywords. Image recognition systems that, on some narrow tasks, spot cancer in medical scans about as reliably as specialists. These aren’t incremental improvements. They’re qualitative leaps that become feasible only when computational horsepower reaches critical thresholds.

So the real question isn’t whether advanced GPU chipsets improve computing power. Obviously they do, and spectacularly so. The question that keeps me awake at night is simpler and more profound:

What becomes possible when you suddenly have orders of magnitude more processing capability at your disposal?

Because honestly? We’re only beginning to find out.